In the old days - memory wasn't virtualized or protected and any code could write anywhere. But let me repeat: this is an extremely unusual situation that might occur in something like a specialized scientific data-crunching project but would almost never apply to common business/corporate/enterprise scenarios. In such a case we come back to your original Question: In this special situation you would indeed want to constrain the operations to have no more of these hyper-active threads than you have physical cores. So there is an option in the CPU firmware where a sysadmin can throw a switch on the machine to disable SMT if she decides it would benefit her users running an app that is unusually CPU-bound with very few opportunities for pausing. Like three balls on a playground being shared between nine kids, versus sharing between nine hundred kids where no one kid really gets any serious playtime with a ball. Too many CPU-intensive threads all clamoring for execution time to be scheduled on a core can make the system inefficient, with no one thread getting much work done. So a SMT enabled CPU with four physical cores, for example, will present itself to the OS as an eight-core CPU.ĭespite this optimization, there is still some overhead to switching between such virtual cores. This works so well that the each core presents itself as a pair of virtual cores to the OS. While the pair of threads does not actually execute simultaneously, the switching is so smooth and fast that both threads appear to be virtually simultaneous. Known generically as simultaneous multi-threading (SMT). Intel calls their technology Hyper-Threading.

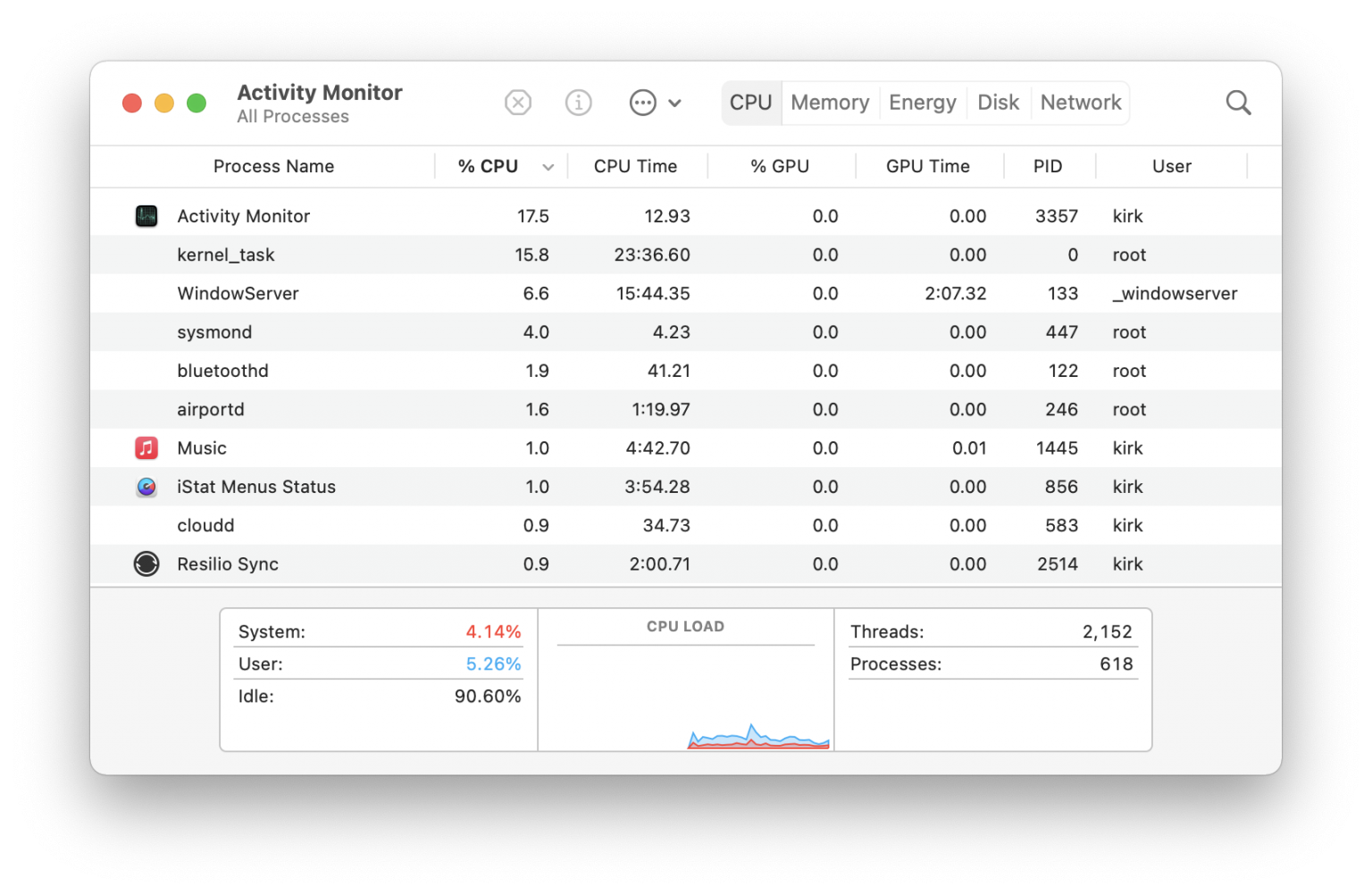

Some CPU makers have developed technology to cut this time so as to make switching between a pair of threads much faster. Part of the operating system’s job is to try to be smart in scheduling the threads as to optimize around this overhead cost. There is a significant cost in time to unload the current thread’s instructions and data from the core and then load next scheduled thread ’s instructions and data. One issue there is the overhead cost of switching between threads when scheduled by the OS. Simultaneous Multi-ThreadingĪs for your linked Question, threads there are indeed the same as here. And if running a “tight” loop that is CPU-intensive with no cause to wait on external resources, the programmer can insert a call to volunteer to be set aside briefly so as to not hog the core and thereby allow other threads to execute.įor more detail, see the Wikipedia page for multithreading. The app programmer can assist this scheduling operation by sleeping her thread for a certain amount of time when she knows the wait for the external resource will take some time. With almost nothing to do but check the status of the awaited resource, such threads are scheduled quite briefly if at all. Likewise, many threads may be waiting on some resource such as data to be retrieved from storage, or a network connection to be completed, or data to be loaded from a database. Many threads have no work to do, and so are left dormant and unscheduled. Round and round, threads execute a little bit at a time, not all at once.Ī major responsibility of the operating system (Darwin and macOS) is the scheduling of which thread is to be executed on which core for how long. One core runs a bit of the thread, then sets it aside to execute a bit of another thread. Your 1,805 threads do not run simultaneously.
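The cooperative habits described above can be sketched in Python: sleeping while waiting on an external resource, and periodically yielding from a tight CPU-bound loop. The sketch also covers the logical-versus-physical core arithmetic from the SMT discussion. It is a minimal illustration under stated assumptions. The helper names `yield_core`, `tight_loop`, `wait_for`, and `logical_cores` are hypothetical, and the default of two threads per core assumes typical two-way SMT such as Hyper-Threading. In CPython the global interpreter lock also keeps threads from running Python bytecode in parallel, so this shows scheduling behavior, not speedup.

```python
import os
import threading
import time
from typing import Callable


def yield_core() -> None:
    """Volunteer to be set aside so other runnable threads can use this core."""
    # os.sched_yield() wraps POSIX sched_yield() but is not available on every
    # platform (Windows lacks it), so fall back to a zero-length sleep there.
    if hasattr(os, "sched_yield"):
        os.sched_yield()
    else:
        time.sleep(0)


def tight_loop(iterations: int, yield_every: int = 5_000) -> int:
    """CPU-bound busy work that periodically yields instead of hogging the core."""
    total = 0
    for i in range(iterations):
        total += i % 7  # stand-in for real number crunching
        if i and i % yield_every == 0:
            yield_core()
    return total


def wait_for(is_ready: Callable[[], bool],
             poll_interval: float = 0.01,
             timeout: float = 1.0) -> bool:
    """Sleep between checks of an external resource rather than spinning on it."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        if is_ready():
            return True
        # While this thread sleeps, the scheduler is free to run other threads.
        time.sleep(poll_interval)
    return is_ready()  # one last check after the timeout


def logical_cores(physical_cores: int, smt_enabled: bool,
                  threads_per_core: int = 2) -> int:
    """How many cores the OS sees (hypothetical helper; assumes 2-way SMT)."""
    return physical_cores * threads_per_core if smt_enabled else physical_cores


if __name__ == "__main__":
    # os.cpu_count() reports logical CPUs, so SMT virtual cores are counted.
    print("Logical CPUs reported by the OS:", os.cpu_count())
    print("4 physical cores with SMT appear as:", logical_cores(4, smt_enabled=True))

    results = []
    results_lock = threading.Lock()

    def worker() -> None:
        value = tight_loop(20_000)
        with results_lock:
            results.append(value)

    # Often more threads than cores: the OS time-slices them, a bit at a time.
    threads = [threading.Thread(target=worker) for _ in range(20)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    print("Threads finished:", len(results))
```

Both the sleep in `wait_for` and the periodic yield in `tight_loop` hand the core back to the scheduler. The first suits a thread that is waiting on something; the second suits a loop that never waits. The rare CPU-bound-only case calls for no more busy threads than physical cores. For that case, the worker count would come from the physical core count rather than `os.cpu_count()`. The standard library does not report physical cores directly, so that number would have to come from elsewhere, for example `sysctl hw.physicalcpu` on macOS.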
